Now, let us look at boosting from the point of view of a beginner who doesn't know much about machine learning. Sequential ensemble methods, known as "boosting," work like a team of learners. Each learner tries to fix the mistakes made by the previous one. Think of it as a relay race where the baton improves with each handoff. In the example, the first classifier gets the training data. It makes some mistakes (points whose background colour does not match their symbol), and those mistakes get extra attention. The second classifier focuses on fixing those errors. This process continues until all the data is predicted correctly or a limit on the number of learners is reached, and the right limit is chosen using hyperparameter tuning. It's like a team effort where each member makes the group better. XGBoost is a machine learning tool built on this idea, and it is exceptionally good at making accurate predictions, even for complex problems. Learning the basics of machine learning is the first step to mastering XGBoost.

Earlier, we used to code a certain logic and then give the input to the computer program. All this was great, but as our understanding increased, so did the complexity of our programs, until we realised that for certain problem statements, there were far too many parameters to program. And then some smart individual said that we should just give the computer (machine) both the problem and the solution for a sample set and then let the machine learn. While developing the algorithms for machine learning, we realised that we could roughly put machine learning problems into two categories: classification and regression. In simple terms, a classification problem could be: given a photo of an animal, classify it as a dog or a cat (or some other animal). In contrast, predicting the temperature of a city is a regression problem, as temperature takes continuous values such as 40 degrees, 40.1 degrees and so on.
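To make the classification/regression distinction concrete, here is a minimal sketch in plain Python. The data is made up for illustration: animal weights in kilograms labelled "cat" or "dog" for classification, and hour-of-day readings paired with temperatures for regression. The nearest-centroid classifier and the least-squares line are deliberately simple choices, not how XGBoost works internally; the point is that both learn from (problem, solution) pairs, but one answers with a label and the other with a continuous number.

```python
from statistics import mean

# Hypothetical toy data (made up for illustration only).
# Classification: animal weight in kg -> discrete label.
animal_weights = [3.0, 4.0, 5.0, 20.0, 25.0, 30.0]
animal_labels = ["cat", "cat", "cat", "dog", "dog", "dog"]

# Regression: hour of the day -> temperature in degrees (a continuous value).
hours = [1.0, 2.0, 3.0, 4.0]
temperatures = [30.0, 32.0, 34.0, 36.0]


def fit_nearest_centroid(xs, labels):
    """Learn one average feature value (centroid) per class."""
    return {
        label: mean(x for x, lab in zip(xs, labels) if lab == label)
        for label in set(labels)
    }


def predict_class(centroids, x):
    """Return the label whose centroid is closest to x (a discrete output)."""
    return min(centroids, key=lambda label: abs(x - centroids[label]))


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx


def predict_value(params, x):
    """Return a continuous prediction from the fitted line."""
    slope, intercept = params
    return slope * x + intercept


centroids = fit_nearest_centroid(animal_weights, animal_labels)
line = fit_line(hours, temperatures)

print(predict_class(centroids, 6.0))  # classification -> cat
print(predict_value(line, 5.0))       # regression -> 38.0
```

The classifier can only ever answer with one of the labels it has seen, while the regression line returns values such as 38.0 that never appeared in its training data.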
In boosting, classifier models are added one after another until the combined final classifier predicts every item in the training dataset correctly or a maximum number of classifier models has been added. The optimal maximum number of classifier models to train can be determined using hyperparameter tuning.
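The stopping rule and the tuning step can be sketched with a small AdaBoost-style loop in plain Python. AdaBoost is one classic boosting algorithm that trains classifiers in sequence and reweights the data between them; XGBoost itself is a gradient boosting method, so treat this as an illustration of the idea rather than XGBoost's internals. The toy data, the decision-stump learners, the validation set and the candidate caps are all hypothetical.

```python
import math

# Hypothetical 1-D toy data: +1 stands for "plus", -1 for "hyphen".
X_train = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
Y_train = [1, 1, -1, -1, 1, 1, -1, -1]
X_val = [1.5, 3.5, 5.5, 7.5]
Y_val = [1, -1, 1, -1]


def stump_predict(stump, x):
    """A decision stump: one threshold, predicting +polarity above it."""
    threshold, polarity = stump
    return polarity if x > threshold else -polarity


def fit_stump(xs, ys, weights):
    """Return the stump with the lowest weighted error, and that error."""
    values = sorted(set(xs))
    thresholds = [values[0] - 1.0] + [(a + b) / 2 for a, b in zip(values, values[1:])]
    best_err, best_stump = float("inf"), None
    for threshold in thresholds:
        for polarity in (1, -1):
            stump = (threshold, polarity)
            err = sum(w for x, y, w in zip(xs, ys, weights) if stump_predict(stump, x) != y)
            if err < best_err:
                best_err, best_stump = err, stump
    return best_stump, best_err


def ensemble_predict(ensemble, x):
    """Weighted vote of all classifiers added so far."""
    score = sum(alpha * stump_predict(stump, x) for alpha, stump in ensemble)
    return 1 if score >= 0 else -1


def boost(xs, ys, max_learners):
    """Add classifiers until every training point is correct or max_learners is reached."""
    weights = [1.0 / len(xs)] * len(xs)
    ensemble = []
    while len(ensemble) < max_learners:
        stump, err = fit_stump(xs, ys, weights)
        err = min(max(err, 1e-10), 1 - 1e-10)  # keep log() finite
        alpha = 0.5 * math.log((1 - err) / err)
        ensemble.append((alpha, stump))
        # Misclassified points gain weight so the next classifier focuses on them.
        weights = [w * math.exp(-alpha * y * stump_predict(stump, x))
                   for x, y, w in zip(xs, ys, weights)]
        total = sum(weights)
        weights = [w / total for w in weights]
        if all(ensemble_predict(ensemble, x) == y for x, y in zip(xs, ys)):
            break  # the combined classifier gets every training point right
    return ensemble


def accuracy(ensemble, xs, ys):
    """Fraction of points the ensemble predicts correctly."""
    return sum(ensemble_predict(ensemble, x) == y for x, y in zip(xs, ys)) / len(xs)


# Hyperparameter tuning: try a few caps and keep the best on held-out data.
candidate_caps = [1, 2, 5, 10, 20]
best_cap = max(candidate_caps,
               key=lambda cap: accuracy(boost(X_train, Y_train, cap), X_val, Y_val))
print("best maximum number of classifiers:", best_cap)
```

Tuning on a separate validation set, rather than on the training data, is what keeps the cap from simply growing until the ensemble memorises the training points.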

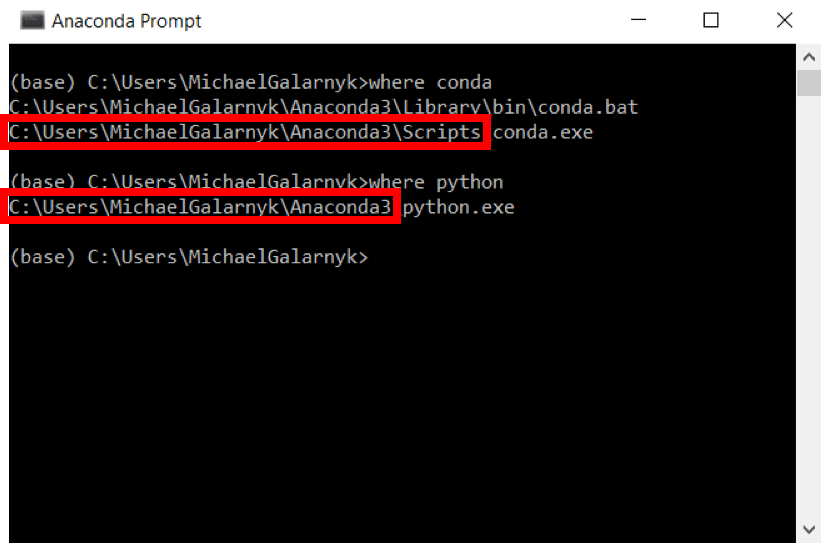

The first model is built on the training data, the second model improves on the first, the third improves on the second, and so on. In the image above, the training dataset is passed to classifier 1. A yellow background indicates that the classifier predicted a hyphen, and a sea-green background indicates that it predicted a plus. Classifier 1 incorrectly predicts two hyphens and one plus. The weights of these incorrectly predicted data points are increased (to a certain extent, as shown in the updated datasets), and the reweighted data is sent to the next classifier. Classifier 2 correctly predicts the two hyphens that classifier 1 missed, but it also makes some other errors.
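The reweighting step can be worked through numerically. The sketch below uses the AdaBoost update rule, one standard way of increasing the weights of misclassified points; it assumes 10 equally weighted points of which classifier 1 gets 3 wrong (the image's actual point count may differ), and the `reweight` function is a hypothetical helper.

```python
import math


def reweight(weights, correct):
    """One AdaBoost-style update; returns (alpha, new_weights).

    weights: current sample weights, summing to 1
    correct: booleans, True where the classifier's prediction was right
    """
    error = sum(w for w, ok in zip(weights, correct) if not ok)
    alpha = 0.5 * math.log((1 - error) / error)  # this classifier's say in the final vote
    # Misclassified points are scaled up by e^alpha, correct ones down by e^-alpha.
    raw = [w * math.exp(-alpha if ok else alpha) for w, ok in zip(weights, correct)]
    total = sum(raw)
    return alpha, [w / total for w in raw]


# Hypothetical setup: 10 equally weighted points; classifier 1 misses 3 of them
# (for example, two hyphens and one plus).
weights = [0.1] * 10
correct = [False, False, False] + [True] * 7

alpha, new_weights = reweight(weights, correct)
print(round(alpha, 3))
print(round(new_weights[0], 4), round(new_weights[-1], 4))
```

With a weighted error of 0.3, each misclassified point's weight rises from 0.1 to 1/6, and each correct point's weight falls to 1/14, so after the update the three mistakes carry half of the total weight that classifier 2 sees.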

Sequential ensemble methods, also known as “boosting”, create a sequence of models in which each model attempts to correct the mistakes of the models before it.
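One common way for each model to correct its predecessors, and the one gradient boosting (and therefore XGBoost) builds on, is to fit every new model to the residual errors left by the models so far. The sketch below assumes squared-error regression, one-split stumps as the weak learners, a hypothetical learning rate of 0.5 and made-up data; it is a teaching toy, not XGBoost's implementation.

```python
# Hypothetical toy regression data: x values and targets.
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
ys = [1.0, 1.2, 0.9, 3.0, 3.2, 2.9]


def fit_residual_stump(xs, residuals):
    """Fit a one-split regression stump to the residuals by least squares."""
    best = None
    for t in sorted(set(xs))[:-1]:  # candidate split points
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        left_mean, right_mean = sum(left) / len(left), sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, t, left_mean, right_mean)
    _, t, left_mean, right_mean = best
    return lambda x: left_mean if x <= t else right_mean


def gradient_boost(xs, ys, n_models, learning_rate=0.5):
    """Each new stump is trained on the mistakes (residuals) of the models before it."""
    base = sum(ys) / len(ys)  # starting model: always predict the mean
    models = []

    def predict(x):
        return base + learning_rate * sum(m(x) for m in models)

    for _ in range(n_models):
        residuals = [y - predict(x) for x, y in zip(xs, ys)]
        models.append(fit_residual_stump(xs, residuals))
    return predict


def mse(predict, xs, ys):
    """Mean squared error of a predictor on (xs, ys)."""
    return sum((y - predict(x)) ** 2 for x, y in zip(xs, ys)) / len(xs)


for n in (0, 1, 3, 10):
    print(n, "models -> training MSE", round(mse(gradient_boost(xs, ys, n), xs, ys), 4))
```

Scaling each stump by a learning rate below 1 means no single model fixes all the remaining error at once, which is why gradient boosting typically needs many models in the sequence.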

Updated by Chainika Thakar (Originally written by Ishan Shah and compiled by Rekhit Pachanekar)

XGBoost…!!!! Often considered a miraculous tool embraced by machine learning enthusiasts and competition champions, XGBoost was designed to enhance computational speed and optimise the performance of machine learning models.

XGBoost, short for "eXtreme Gradient Boosting," is rooted in gradient boosting, and the name certainly has an exciting ring to it, almost like a high-performance sports car rather than a machine learning model. However, its name perfectly aligns with its purpose: supercharging the performance of a standard gradient boosting model. Tianqi Chen, the creator of XGBoost, has credited its superior performance to a more regularised model formulation, which helps it control overfitting.
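The "more regularised model formulation" refers to the penalty XGBoost adds to its training objective: for a tree with T leaves and leaf weights w, the penalty is γT + ½λ‖w‖². The sketch below computes two quantities that follow from that objective in the XGBoost paper: the optimal leaf weight w* = -G / (H + λ) and the split gain, where G and H are the sums of the loss's first and second derivatives over the points in a leaf. The function names are hypothetical, and the example assumes squared-error loss, for which each point's gradient is (prediction - target) and its hessian is 1.

```python
def leaf_weight(grads, hessians, reg_lambda):
    """Optimal leaf value w* = -G / (H + lambda)."""
    return -sum(grads) / (sum(hessians) + reg_lambda)


def leaf_score(grads, hessians, reg_lambda):
    """Leaf quality term G^2 / (H + lambda) used in the split gain."""
    return sum(grads) ** 2 / (sum(hessians) + reg_lambda)


def split_gain(left, right, reg_lambda, gamma):
    """Gain = 1/2 [score(L) + score(R) - score(L+R)] - gamma; split only if positive."""
    (gl, hl), (gr, hr) = left, right
    return 0.5 * (leaf_score(gl, hl, reg_lambda)
                  + leaf_score(gr, hr, reg_lambda)
                  - leaf_score(gl + gr, hl + hr, reg_lambda)) - gamma


def grads_hessians(targets, prediction=0.0):
    """Squared-error loss: gradient = prediction - target, hessian = 1."""
    return [prediction - y for y in targets], [1.0] * len(targets)


leaf = grads_hessians([2.0, 2.0, 2.0, 2.0])
print(leaf_weight(*leaf, reg_lambda=0.0))  # 2.0: no penalty
print(leaf_weight(*leaf, reg_lambda=4.0))  # 1.0: lambda shrinks the leaf value

left, right = grads_hessians([1.0, 1.0]), grads_hessians([5.0, 5.0])
print(split_gain(left, right, reg_lambda=1.0, gamma=0.0))  # positive gain
print(split_gain(left, right, reg_lambda=1.0, gamma=3.0))  # negative: gamma blocks the split
```

λ pulls leaf values towards zero and γ charges a fixed cost for every extra leaf, so small, noisy improvements are not worth a split; both work against overfitting. In the xgboost library these penalties are exposed as the `lambda` (alias `reg_lambda`) and `gamma` (alias `min_split_loss`) parameters.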
